Tennis Serve Dataset & Analysis

A large-scale 3D pose estimation dataset of 5,966 professional tennis serves from broadcast video, enabling motion classification, player identification, and performance prediction using deep learning at unprecedented scale.

Large Scale 3D Pose Estimation Dataset of Professional Tennis Serves

Wang J., Kim E., Ho P., Min S., Kupperman N., Baek S.

Automated pipeline: Object Detection → 2D Pose → 3D Lifting → Tracking → Annotation Matching → Action Classification

Overview

This work presents the first large-scale 3D pose estimation dataset of professional tennis serves, comprising 5,966 serves from 109 players across 113 matches. Using an automated pipeline built on state-of-the-art computer vision models, we extract 3D joint positions and biomechanical joint angles from monocular broadcast video. The resulting dataset enables population-level analysis of serve kinematics, classification of player identity and gender from motion alone, and prediction of serve speed, bridging the gap between controlled laboratory studies and real competition data.

Abstract

Background

The tennis serve is one of the most biomechanically complex movements in sport, yet large-scale kinematic analysis has been limited to small laboratory studies with fewer than 20 participants. Broadcast video offers an untapped source of data from elite competition, but extracting reliable 3D pose information from monocular footage remains challenging.

Purpose

To construct and validate a large-scale dataset of 3D joint positions and biomechanical angles for professional tennis serves extracted from broadcast video, and to demonstrate its utility for population-level kinematic analysis, player classification, and performance prediction.

Methods

We developed an automated pipeline combining RTMDet for object detection, RTMPose for 2D pose estimation, MotionBERT for 3D lifting, and Dynamic Time Warping for temporal action recognition. The pipeline processes monocular broadcast footage to extract per-frame 3D joint positions and derive biomechanical joint angles for 5,966 serves across 109 professional players.

Results

The extracted joint angle trajectories align with published biomechanical literature on serve kinematics. Motion-based classification achieves 97.3% accuracy for gender, 99.2% for player identity, and 84.0% for serve quality. Joint angle derivative features enable serve speed prediction, with shoulder and elbow angular velocities emerging as the strongest predictors.

Conclusions

This dataset bridges the gap between controlled laboratory studies and real-world competition analysis. The scale of data enables population-level insights into serve biomechanics that were previously inaccessible, including the discovery of player-specific kinematic fingerprints and systematic gender differences in joint coordination patterns.

Challenges

1. Broadcast video has limited camera angles and quality

2. Fast movements cause motion blur and tracking failures

3. No existing large-scale annotated tennis pose datasets

4. Validating results against established biomechanical research

Methodology

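Our automated pipeline transforms raw broadcast video into structured biomechanical data through seven sequential stages, combining deep learning models for detection and pose estimation with classical methods for serve segmentation, annotation matching, and signal processing.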

Data Source & Collection

Professional tennis matches were sourced from publicly available broadcast footage covering major tournaments. A total of 113 matches featuring 109 unique players were selected, ensuring diversity across playing styles, surfaces, and competition levels.

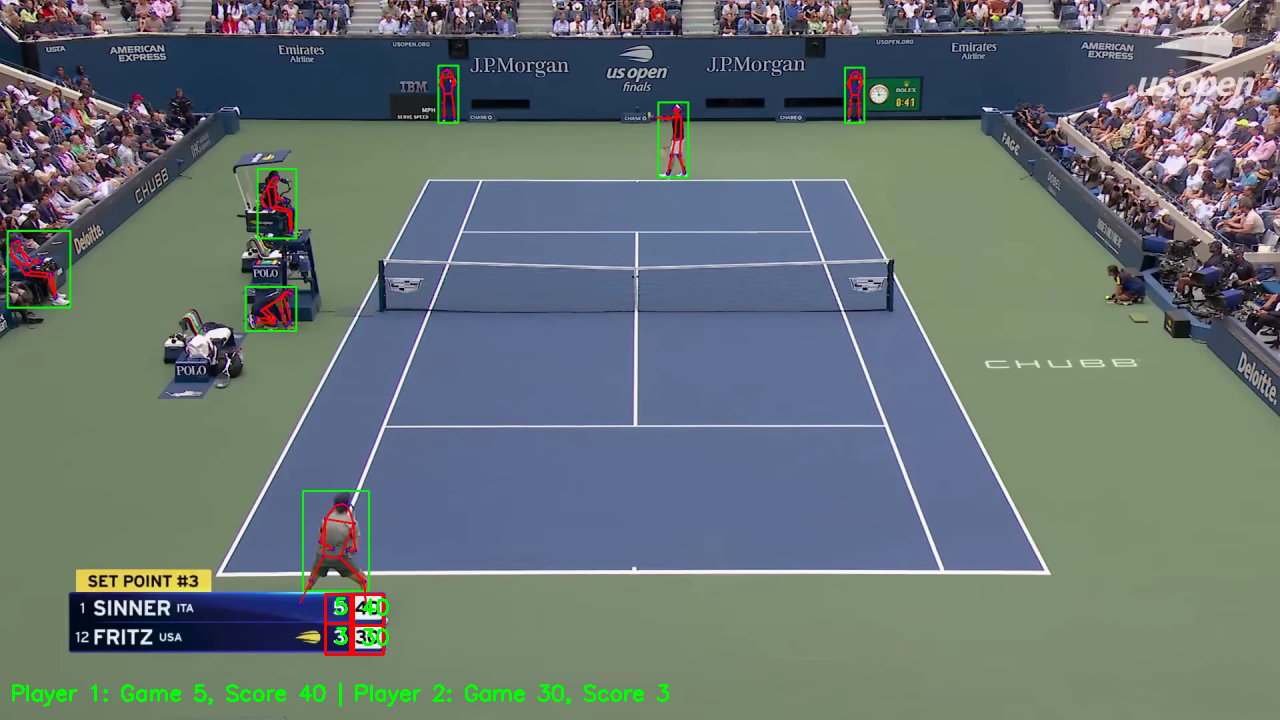

Object Detection (RTMDet)

RTMDet was used to detect and localize players in each frame. The detector provides bounding boxes that are tracked across frames to maintain consistent player identity throughout each point, handling camera switches and occlusions.
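
The source does not say how bounding boxes are associated from one frame to the next. As an illustration only, the sketch below uses greedy intersection-over-union (IoU) matching, a common baseline for this kind of tracking. The function names, the `(x1, y1, x2, y2)` box layout, and the `min_iou` threshold are assumptions, not details of the authors' tracker.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def match_to_previous(prev_boxes, new_boxes, min_iou=0.3):
    """Greedily assign each existing track to the unused new box with the highest IoU.

    prev_boxes: dict mapping a track id to its box in the previous frame.
    new_boxes:  list of detected boxes in the current frame.
    Returns a dict mapping track id to an index into new_boxes.
    Tracks with no box at or above min_iou (hypothetical threshold) are left unmatched.
    """
    assignments, used = {}, set()
    for track_id, prev in prev_boxes.items():
        best_index, best_score = None, min_iou
        for j, box in enumerate(new_boxes):
            if j in used:
                continue
            score = iou(prev, box)
            if score >= best_score:
                best_index, best_score = j, score
        if best_index is not None:
            assignments[track_id] = best_index
            used.add(best_index)
    return assignments


# Two players, detections arrive in a different order in the next frame.
previous = {"near_player": (100, 400, 200, 600), "far_player": (300, 50, 350, 150)}
current = [(305, 52, 355, 152), (110, 405, 210, 605)]
matches = match_to_previous(previous, current)
```

A track left unmatched here would be a natural place to handle a camera switch or occlusion, for example by re-initializing the track once the player reappears.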

2D Pose Estimation (RTMPose)

RTMPose extracts 2D joint positions for each detected player at 30 fps. The model produces 17 keypoints per frame following the COCO skeleton topology, with confidence scores used to filter low-quality detections caused by motion blur or partial occlusion.
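
A minimal sketch of confidence-based filtering, assuming the keypoints and scores have already been collected into NumPy arrays. The threshold of 0.5 and the minimum fraction of valid joints are hypothetical values; the source does not state which thresholds were used.

```python
import numpy as np

CONF_THRESHOLD = 0.5  # hypothetical; not stated in the source


def mask_low_confidence(keypoints, scores, threshold=CONF_THRESHOLD):
    """Replace low-confidence 2D keypoints with NaN.

    keypoints: array of shape (frames, 17, 2) holding (x, y) pixel coordinates.
    scores:    array of shape (frames, 17) holding per-joint confidence values.
    """
    keypoints = np.asarray(keypoints, dtype=float)
    scores = np.asarray(scores, dtype=float)
    cleaned = keypoints.copy()
    # The boolean mask has shape (frames, joints), so both x and y are set to NaN.
    cleaned[scores < threshold] = np.nan
    return cleaned


def frame_is_usable(scores, threshold=CONF_THRESHOLD, min_fraction=0.8):
    """Return a boolean per frame: True if enough joints pass the threshold."""
    scores = np.asarray(scores, dtype=float)
    return (scores >= threshold).mean(axis=1) >= min_fraction


# Two frames of 17 COCO keypoints; one joint in the first frame is unreliable.
example_keypoints = np.ones((2, 17, 2))
example_scores = np.full((2, 17), 0.9)
example_scores[0, 3] = 0.1
cleaned = mask_low_confidence(example_keypoints, example_scores)
usable = frame_is_usable(example_scores)
```

Marking joints as NaN rather than dropping frames keeps the time axis intact, so gaps can later be interpolated or the frame rejected as a whole.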

3D Pose Lifting (MotionBERT)

MotionBERT lifts 2D joint positions into 3D space using temporal context from surrounding frames. The transformer-based architecture captures motion dynamics to produce anatomically plausible 3D reconstructions from monocular input, outputting metric-scale 3D coordinates.

Action Recognition (DTW)

Dynamic Time Warping aligns detected motion sequences against canonical serve templates to identify and segment individual serves. This temporal alignment handles natural variations in serve speed and rhythm while providing precise phase boundaries for biomechanical analysis.
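
To illustrate why Dynamic Time Warping tolerates differences in tempo, here is a minimal sketch of the classic DTW recurrence on a one-dimensional signal (for instance a single joint angle over time). It is not the authors' implementation: the template values are made up, and a real pipeline would likely compare multi-joint pose vectors and search for serves inside a longer sequence.

```python
import math


def dtw_distance(query, template):
    """Cumulative alignment cost between two 1-D sequences using classic DTW."""
    n, m = len(query), len(template)
    # cost[i][j] is the minimal cost of aligning query[:i] with template[:j].
    cost = [[math.inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = abs(query[i - 1] - template[j - 1])
            cost[i][j] = local + min(
                cost[i - 1][j],      # query advances, template frame is repeated
                cost[i][j - 1],      # template advances, query frame is repeated
                cost[i - 1][j - 1],  # both advance together
            )
    return cost[n][m]


# Made-up template and two candidates: the same shape at half speed, and a flat signal.
template = [0, 1, 2, 3, 2, 1, 0]
slow_serve = [0, 0, 1, 1, 2, 2, 3, 3, 2, 2, 1, 1, 0, 0]
not_a_serve = [3, 3, 3, 3, 3, 3, 3]

slow_cost = dtw_distance(slow_serve, template)
flat_cost = dtw_distance(not_a_serve, template)
```

The half-speed candidate aligns with zero cost because each template frame can be matched to two consecutive query frames, whereas the flat signal accumulates cost at every template frame that differs from it.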

Annotation Integration

Detected serves are matched with point-level metadata including server identity, score, serve number (first/second), and outcome (ace, fault, in-play). This integration links kinematic data to performance context, enabling analysis of how technique varies with match situation.

Biomechanical Post-Processing

Raw 3D joint positions are converted to biomechanical joint angles using anatomically-defined coordinate systems. Butterworth low-pass filtering removes high-frequency noise, and joint angle derivatives (angular velocities and accelerations) are computed to characterize the dynamics of each serve phase.
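
The post-processing steps can be sketched as follows, assuming NumPy and SciPy are available. Several simplifications are assumptions rather than the authors' method: the joint angle is computed as a simple three-point included angle instead of using anatomical coordinate systems, and the cutoff frequency, filter order, and zero-phase filtering via `filtfilt` are hypothetical choices. Only the 30 fps frame rate comes from the text.

```python
import numpy as np
from scipy.signal import butter, filtfilt

FPS = 30.0       # frame rate stated for the 2D pose stage
CUTOFF_HZ = 6.0  # hypothetical cutoff; must be below the 15 Hz Nyquist limit
ORDER = 4        # hypothetical filter order


def joint_angle_deg(a, b, c):
    """Included angle at joint b, in degrees, between segments b->a and b->c.

    a, b, c: arrays of shape (frames, 3), e.g. shoulder, elbow, wrist positions.
    """
    u = np.asarray(a, dtype=float) - np.asarray(b, dtype=float)
    v = np.asarray(c, dtype=float) - np.asarray(b, dtype=float)
    cosine = np.sum(u * v, axis=-1) / (
        np.linalg.norm(u, axis=-1) * np.linalg.norm(v, axis=-1)
    )
    # Clip to guard against rounding slightly outside [-1, 1].
    return np.degrees(np.arccos(np.clip(cosine, -1.0, 1.0)))


def smooth(signal, fps=FPS, cutoff_hz=CUTOFF_HZ, order=ORDER):
    """Zero-phase Butterworth low-pass filter applied along the time axis."""
    b, a = butter(order, cutoff_hz, btype="low", fs=fps)
    return filtfilt(b, a, signal)


def derivatives(angle_deg, fps=FPS):
    """Angular velocity (deg/s) and acceleration (deg/s^2) by finite differences."""
    velocity = np.gradient(angle_deg, 1.0 / fps)
    acceleration = np.gradient(velocity, 1.0 / fps)
    return velocity, acceleration


# Synthetic check: a fixed right angle at the elbow over 54 frames.
frames = 54
shoulder = np.tile([1.0, 0.0, 0.0], (frames, 1))
elbow = np.zeros((frames, 3))
wrist = np.tile([0.0, 1.0, 0.0], (frames, 1))
angle = smooth(joint_angle_deg(shoulder, elbow, wrist))
velocity, acceleration = derivatives(angle)
```

Filtering before differentiating matters because finite differences amplify high-frequency noise, so the order of `smooth` and `derivatives` should not be swapped.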

Approach

1. Developed automated pipeline for serve detection and pose extraction

2. Applied state-of-the-art 2D and 3D pose estimation

3. Created annotation matching system for temporal alignment

4. Computed biomechanical metrics matching clinical standards

Results & Demos

Data captured from professional tennis broadcasts

Automated serve detection and tracking

3D pose estimation on tennis serve

Biomechanical analysis visualization

Multi-player pose comparison

Serve phase segmentation and alignment

Findings

Analysis of the dataset reveals consistent biomechanical patterns that align with existing literature while providing new population-level insights enabled by the unprecedented scale of data.

Dataset Overview

The final dataset comprises 5,966 serves from 109 players (54 male, 55 female) across 113 professional matches. Each serve contains per-frame 3D joint positions (17 keypoints), derived biomechanical joint angles for major upper-body joints, and associated metadata. The average serve duration is 1.8 seconds with a mean of 54 frames per serve.

Joint Angle Trajectories

Mean joint angle trajectories for shoulder abduction, shoulder rotation, elbow flexion, and trunk rotation closely match patterns reported in laboratory-based biomechanical studies. Peak shoulder external rotation reaches approximately 170° during the late cocking phase, consistent with published values of 160-180°. Elbow extension velocity peaks during the acceleration phase, consistent with the proximal-to-distal kinetic chain sequence.

Classification Results

Motion-based classification using joint angle derivative features demonstrates that serve kinematics encode substantial information about player identity and characteristics. All classifiers used Random Forest models with 5-fold cross-validation.

| Task | Accuracy | Baseline | Lift (percentage points) |
|---|---|---|---|
| Gender Classification | 97.3% | 50.5% | +46.8 |
| Player Identification | 99.2% | 0.9% | +98.3 |
| Serve Quality (Ace vs Fault) | 84.0% | 50.0% | +34.0 |
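
The source does not say how the baselines were derived, but the reported values are consistent with simple chance-level reasoning over the 54 male and 55 female players. The short check below works through that arithmetic. Treating the gender baseline as a majority-class guess, the player baseline as a uniform guess over 109 players, and the serve quality classes as balanced are all assumptions.

```python
# Player counts as stated in the dataset overview.
N_MALE, N_FEMALE = 54, 55
N_PLAYERS = N_MALE + N_FEMALE  # 109


def pct(fraction):
    """Convert a fraction to a percentage rounded to one decimal place."""
    return round(100.0 * fraction, 1)


def lift_pp(accuracy_pct, baseline_pct):
    """Lift in percentage points: accuracy minus baseline."""
    return round(accuracy_pct - baseline_pct, 1)


# Assumption: always predict the larger gender class.
gender_baseline = pct(max(N_MALE, N_FEMALE) / N_PLAYERS)
# Assumption: guess uniformly at random among all players.
player_baseline = pct(1 / N_PLAYERS)
# Assumption: ace and fault classes are balanced.
quality_baseline = 50.0

for task, accuracy, baseline in [
    ("Gender Classification", 97.3, gender_baseline),
    ("Player Identification", 99.2, player_baseline),
    ("Serve Quality (Ace vs Fault)", 84.0, quality_baseline),
]:
    print(f"{task}: baseline {baseline}%, lift {lift_pp(accuracy, baseline)} points")
```

If the baselines were instead computed per serve rather than per player, the class proportions could differ slightly from these values.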

Speed Prediction

Joint angle derivatives at key serve phases enable prediction of serve speed. Shoulder internal rotation velocity and elbow extension velocity during the acceleration phase are the strongest individual predictors. A multivariate model combining angular velocities from the shoulder, elbow, trunk, and wrist correlates with recorded serve speeds, which is consistent with the established biomechanical relationship between joint angular velocities and ball speed.
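
The kind of multivariate model described above can be sketched with ordinary least squares. The source does not specify the model family, so linear regression is an assumption here, and everything in the example is synthetic: the feature names, the velocity magnitudes, the coefficients, and the noise level are made up purely for illustration and are not results from the dataset.

```python
import numpy as np

rng = np.random.default_rng(0)
n_serves = 200

# Hypothetical features: peak angular velocities (deg/s) during the acceleration phase.
feature_names = ["shoulder_ir_vel", "elbow_ext_vel", "trunk_rot_vel", "wrist_flex_vel"]
X = rng.normal(
    loc=[2000.0, 1500.0, 800.0, 1200.0],
    scale=[300.0, 200.0, 100.0, 150.0],
    size=(n_serves, 4),
)
# Made-up ground-truth weights, used only to generate synthetic serve speeds.
true_weights = np.array([0.03, 0.02, 0.01, 0.005])
speed = 60.0 + X @ true_weights + rng.normal(scale=3.0, size=n_serves)


def fit_linear(features, target):
    """Least-squares fit; returns [intercept, w_1, ..., w_k]."""
    design = np.column_stack([np.ones(len(features)), features])
    coefficients, *_ = np.linalg.lstsq(design, target, rcond=None)
    return coefficients


def predict(coefficients, features):
    """Apply the fitted intercept and weights to new feature rows."""
    return coefficients[0] + features @ coefficients[1:]


coefficients = fit_linear(X, speed)
# In-sample Pearson correlation between predicted and synthetic speeds.
correlation = np.corrcoef(predict(coefficients, X), speed)[0, 1]
```

On real data the correlation should be estimated on held-out serves, ideally from players not seen during fitting, since in-sample correlation overstates predictive power.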

Key Outcomes

- Created dataset of 5,966 annotated professional serves from 109 players

- Achieved 97.3% gender classification and 99.2% player identification accuracy from motion alone

- Validated joint angle patterns against published biomechanical literature

- Demonstrated feasibility of large-scale kinematic analysis from broadcast video

Discussion

The results highlight three major implications for sports biomechanics and computer vision research.

Kinematic Fingerprints

The near-perfect player identification accuracy (99.2%) indicates that each player's serve carries a distinctive kinematic signature, a "motion fingerprint" encoded in the joint angle derivatives. This has implications for player scouting, opponent analysis, and understanding the individuality of motor patterns in elite athletes. The finding suggests that technique is highly individual even at the professional level, where coaching might be expected to produce convergent movement patterns.

Gender Differences in Serve Mechanics

The 97.3% gender classification accuracy reveals systematic biomechanical differences between male and female serves that go beyond simple speed differences. Analysis of feature importance shows that shoulder rotation range, trunk angular velocity, and wrist snap timing contribute most to distinguishing male from female serves. These differences likely reflect both anatomical factors and coaching traditions, and warrant further investigation for gender-specific training optimization.
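
A feature importance analysis of this kind could look like the sketch below, which assumes scikit-learn and follows the stated setup of a Random Forest with 5-fold cross-validation. The data is synthetic: the first three columns borrow the feature names mentioned above and are given an artificial class shift, and the fourth is pure noise. The number of trees and the shift sizes are hypothetical, and the output says nothing about the real dataset.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score

rng = np.random.default_rng(0)
n_serves = 400

feature_names = [
    "shoulder_rotation_range",
    "trunk_angular_velocity",
    "wrist_snap_timing",
    "uninformative_noise",  # control feature with no class information
]

# Synthetic binary labels and standardized features.
labels = rng.integers(0, 2, size=n_serves)
features = rng.normal(size=(n_serves, 4))
# Artificial class shifts so that the first three features are informative.
features[:, 0] += 2.0 * labels
features[:, 1] += 1.5 * labels
features[:, 2] += 1.0 * labels

model = RandomForestClassifier(n_estimators=200, random_state=0)

# 5-fold cross-validated accuracy, matching the evaluation protocol in the text.
cv_accuracy = cross_val_score(model, features, labels, cv=5).mean()

# Impurity-based importances from a model fitted on all synthetic samples.
model.fit(features, labels)
importances = dict(zip(feature_names, model.feature_importances_))
ranked = sorted(importances, key=importances.get, reverse=True)
```

Impurity-based importances can be biased toward high-variance features, so permutation importance on held-out folds is a common cross-check.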

Bridging the Lab-Competition Gap

Traditional biomechanical studies are limited to small samples (typically n < 20) in controlled laboratory settings. This dataset, with 5,966 serves from 109 players in actual competition, enables population-level analysis that captures the full range of elite performance variability. While broadcast-derived data has lower precision than marker-based motion capture, the agreement with published literature suggests that the extracted kinematics are accurate enough to support meaningful biomechanical conclusions at scale.