3D Pose-to-Kinematics Mapping

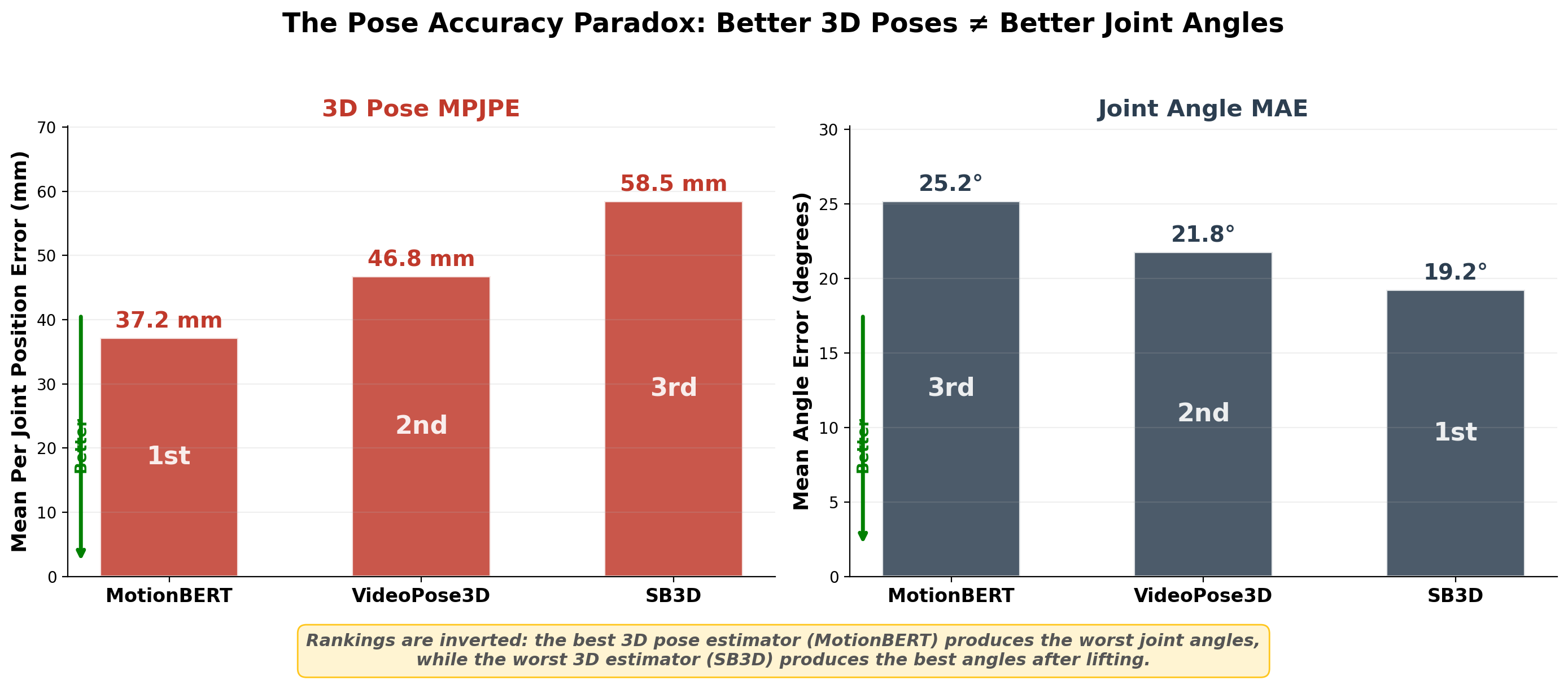

We benchmark three state-of-the-art 3D pose estimation models on biomechanical joint angle accuracy and discover that models with lower MPJPE produce higher joint angle error — challenging a core assumption in the field.

Does Lower MPJPE Mean Better Biomechanics? Evaluating Joint Angle Fidelity of State-of-the-Art 3D Pose Estimation Models

Wang J., Kupperman N., Baek S.

Full pose-to-angle estimation pipeline

Overview

Pose estimation models are universally ranked by Mean Per Joint Position Error (MPJPE), yet biomechanical applications depend on joint angles, not joint positions. We train an 8.4M-parameter residual network to map 3D poses to ISB-standard joint angles at 2.95° MAE, then use it to evaluate three state-of-the-art models (MotionBERT, VideoPose3D, SimpleBaseline3D) on AthletePose3D. The result is paradoxical: MotionBERT, with the lowest published MPJPE (37.2 mm on H36M), produces the worst joint angle accuracy (25.19°), while SimpleBaseline3D, with the highest MPJPE (58.5 mm), achieves the best (19.21°). This inverse relationship holds per-joint and per-subject, indicating that MPJPE is an unreliable proxy for biomechanical fidelity and motivating angle-based evaluation metrics for the field.

Abstract

Background

Practitioners in sports science, rehabilitation, and ergonomics select 3D pose estimation models by MPJPE — the standard spatial accuracy metric. Yet their downstream analyses depend on joint angles, not joint positions. Whether lower MPJPE actually translates to more accurate biomechanical angles has never been systematically tested.

Problem

The mapping from positional error to angular error is nonlinear, especially at multi-axial joints like the hip and shoulder where three rotational degrees of freedom interact. Small positional perturbations can propagate into large angular discrepancies, and the direction of positional error matters more than its magnitude for angle computation. This raises a fundamental question: does lower MPJPE guarantee better joint angles?
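The claim that direction matters more than magnitude can be illustrated with a toy leg. The sketch below is not the paper's code: the joint coordinates, the 40 mm perturbation, and the helper name `joint_angle_deg` are hypothetical choices made only for illustration. It moves the ankle by the same distance in two directions and compares the resulting knee angle error.

```python
import math

def joint_angle_deg(a, b, c):
    """Angle at joint b (degrees) between segments b->a and b->c."""
    u = [a[i] - b[i] for i in range(3)]
    v = [c[i] - b[i] for i in range(3)]
    cross = (u[1] * v[2] - u[2] * v[1],
             u[2] * v[0] - u[0] * v[2],
             u[0] * v[1] - u[1] * v[0])
    dot = sum(x * y for x, y in zip(u, v))
    # The atan2 form stays numerically stable near 0 and 180 degrees.
    return math.degrees(math.atan2(math.sqrt(sum(x * x for x in cross)), dot))

# Hypothetical straight leg, coordinates in metres (x forward, y up).
hip, knee, ankle = (0.0, 0.9, 0.0), (0.0, 0.5, 0.0), (0.0, 0.1, 0.0)
true_angle = joint_angle_deg(hip, knee, ankle)

# Two perturbed ankle estimates, each displaced by 40 mm.
ankle_along = (0.0, 0.06, 0.0)   # error along the shank
ankle_across = (0.04, 0.1, 0.0)  # error perpendicular to the shank

pos_err_along = math.dist(ankle, ankle_along)
pos_err_across = math.dist(ankle, ankle_across)
ang_err_along = abs(joint_angle_deg(hip, knee, ankle_along) - true_angle)
ang_err_across = abs(joint_angle_deg(hip, knee, ankle_across) - true_angle)

print(f"along shank:  {pos_err_along * 1000:.0f} mm -> {ang_err_along:.2f} deg")
print(f"across shank: {pos_err_across * 1000:.0f} mm -> {ang_err_across:.2f} deg")
```

Both perturbations contribute the same 40 mm to a positional metric, yet only the perpendicular one changes the knee angle (by roughly 5.7° in this toy setup).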

Methods

We train an 8.4M-parameter residual network with inverted bottleneck blocks to map bone-relative features (16 bone vectors → 64-dim input) to 12 ISB-standard bilateral joint angles. Ground-truth angles are computed from 142 optical markers using Cardan (hip) and Euler (shoulder) decompositions. The model achieves 2.95° MAE on ground-truth poses and is used to evaluate MotionBERT, VideoPose3D, and SimpleBaseline3D on 996 test clips from AthletePose3D.

Key Finding

The relationship between MPJPE and angle accuracy is inverse. MotionBERT has the lowest published H36M MPJPE (37.2 mm) but the worst angle MAE on AthletePose3D (25.19°). SimpleBaseline3D has the highest MPJPE (58.5 mm) but the best angle MAE (19.21°). VideoPose3D falls in between on both metrics (46.8 mm, 21.77°). The reversal holds per-joint and per-subject, so it is not driven by a single joint or a single subject.

Impact

MPJPE is insufficient as a proxy for biomechanical fidelity. We advocate reporting joint angle accuracy alongside MPJPE in pose estimation benchmarks, and propose the learned pose-to-angle mapping as a lightweight evaluation tool. Future work will explore biomechanically constrained training with a combined loss: L_total = L_pos + λ · L_ang.

Challenges

- The field assumes lower MPJPE implies better downstream biomechanics, but this proxy relationship has never been validated

- Multi-axial joints (hip, shoulder) have three rotational degrees of freedom where small positional errors propagate nonlinearly into large angular discrepancies

- Pose estimation models trained on Human3.6M (H36M) learn lab-specific priors that may not generalize to athletic movements

- No standardized protocol exists for evaluating pose estimation models on biomechanical angle accuracy

Methodology

We evaluate whether MPJPE predicts biomechanical angle accuracy by training a pose-to-angle model on ground-truth data and applying it to three SOTA pose estimators on a real-world athletic dataset.

AthletePose3D Dataset

AthletePose3D contains ~1.2 million frames of real-world athletic motion captured with 142 optical markers at millimeter-level accuracy. We use 996 test clips spanning diverse athletic movements. Unlike H36M's constrained lab environment, AthletePose3D includes the fast, dynamic motions typical of sports biomechanics applications — providing a more realistic evaluation setting.

Ground Truth Angle Computation

Twelve bilateral joint angles are computed from marker trajectories following ISB standards. Hip flexion/extension and abduction/adduction use ZXY Cardan sequences; shoulder flexion/extension and abduction/adduction use YXY Euler sequences; elbow and knee flexion are computed as vector angles between adjacent segments. This yields six angle types × two sides = 12 angles per frame.
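As a rough illustration of the ZXY Cardan decomposition named above, the sketch below builds a rotation matrix R = Rz · Rx · Ry and recovers the three angles from it. It is not the paper's implementation. The function names are hypothetical, and the axis assignment (Z for flexion/extension, X for abduction/adduction, Y for axial rotation) is an assumption about how the segment frames are defined.

```python
import math

def rot_zxy(z, x, y):
    """Rotation matrix R = Rz(z) @ Rx(x) @ Ry(y), angles in radians."""
    cz, sz = math.cos(z), math.sin(z)
    cx, sx = math.cos(x), math.sin(x)
    cy, sy = math.cos(y), math.sin(y)
    return [
        [cz * cy - sz * sx * sy, -sz * cx, cz * sy + sz * sx * cy],
        [sz * cy + cz * sx * sy,  cz * cx, sz * sy - cz * sx * cy],
        [-cx * sy,                sx,      cx * cy],
    ]

def cardan_zxy(R):
    """Recover (z, x, y) in radians from R = Rz @ Rx @ Ry.

    Valid away from gimbal lock, i.e. while |x| < 90 degrees.
    """
    x = math.asin(max(-1.0, min(1.0, R[2][1])))
    z = math.atan2(-R[0][1], R[1][1])
    y = math.atan2(-R[2][0], R[2][2])
    return z, x, y

# Hypothetical hip pose: 40 deg flexion, -15 deg ab/adduction, 10 deg rotation.
angles_in = (40.0, -15.0, 10.0)
R = rot_zxy(*(math.radians(a) for a in angles_in))
angles_out = tuple(math.degrees(a) for a in cardan_zxy(R))
print([round(a, 6) for a in angles_out])
```

In practice R would be the orientation of the distal segment frame expressed in the proximal segment frame, both constructed from marker positions. The round trip here only checks that the extraction formulas match the chosen rotation order.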

Pose-to-Angle Model

Sixteen bone vectors are extracted from the 17-joint skeleton and transformed into 64-dimensional bone-relative features that are invariant to global rotation and translation. These feed into a residual network with 4 inverted bottleneck blocks (512→2048→512), totaling 8.4M parameters. The model achieves 2.95° MAE on ground-truth poses, confirming it as a reliable angle evaluation tool.
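The stated sizes can be sanity-checked with a short parameter count. The sketch below assumes each inverted bottleneck block is two fully connected layers with biases (512→2048→512), plus a linear input projection (64→512) and a linear output head (512→12). Normalization layers and any other details of the actual architecture are not described here and are left out, so treat this as a plausibility check rather than the real model definition.

```python
def linear_params(n_in, n_out, bias=True):
    """Number of weights (and biases) in one fully connected layer."""
    return n_in * n_out + (n_out if bias else 0)

def count_params(in_dim=64, width=512, hidden=2048, n_blocks=4, out_dim=12):
    """Parameter count under the assumed layout: stem, N blocks, head."""
    stem = linear_params(in_dim, width)
    block = linear_params(width, hidden) + linear_params(hidden, width)
    head = linear_params(width, out_dim)
    return stem + n_blocks * block + head

total = count_params()
print(total, f"~ {total / 1e6:.1f}M")  # 8438284 ~ 8.4M
```

Under these assumptions the four bottleneck blocks account for almost all of the 8.4M parameters; the input projection and output head together contribute under 40k.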

3D Pose Estimation Models

Three architecturally distinct models are evaluated: SimpleBaseline3D (fully connected, single-frame, MPJPE 58.5 mm on H36M), VideoPose3D (temporal convolution over 243 frames, 46.8 mm), and MotionBERT (transformer-based dual-stream, 37.2 mm). These span the accuracy–complexity spectrum of current SOTA methods.

Evaluation Protocol

Each model's predicted 3D poses on AthletePose3D are passed through the trained pose-to-angle mapping. Predicted angles are compared against ISB ground truth via Mean Absolute Error (MAE) per joint and per subject. MPJPE values are taken from published H36M benchmarks. This protocol isolates the question: does spatial accuracy (MPJPE) predict angular accuracy?
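The per-joint and per-subject MAE aggregation in this protocol could look like the sketch below. The record layout, the field names, and the four sample values are hypothetical and do not come from AthletePose3D; they only show how the same errors are grouped two ways.

```python
from collections import defaultdict

def mae_by(records, key):
    """Mean absolute angle error (degrees), grouped by records[i][key]."""
    sums, counts = defaultdict(float), defaultdict(int)
    for r in records:
        sums[r[key]] += abs(r["pred"] - r["gt"])
        counts[r[key]] += 1
    return {k: sums[k] / counts[k] for k in sums}

# Hypothetical per-frame results: predicted vs. ground-truth angle in degrees.
records = [
    {"subject": "S1", "angle": "knee_flexion_R", "pred": 50.0, "gt": 40.0},
    {"subject": "S1", "angle": "hip_abduction_R", "pred": 12.0, "gt": 8.0},
    {"subject": "S2", "angle": "knee_flexion_R", "pred": 70.0, "gt": 90.0},
    {"subject": "S2", "angle": "hip_abduction_R", "pred": 5.0, "gt": 11.0},
]

per_angle = mae_by(records, "angle")
per_subject = mae_by(records, "subject")
print(per_angle)    # {'knee_flexion_R': 15.0, 'hip_abduction_R': 5.0}
print(per_subject)  # {'S1': 7.0, 'S2': 13.0}
```

Grouping by subject as well as by angle is what lets a ranking be checked within each subject rather than only on pooled averages.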

Approach

- Extracted bone-relative features (16 bone vectors → 64-dim) as rotation-invariant input to an 8.4M-parameter residual network with inverted bottleneck blocks

- Computed ground-truth angles from 142 optical markers for 12 bilateral ISB-standard angles (hip, shoulder, elbow, knee) using Cardan/Euler decompositions

- Evaluated three architecturally distinct SOTA models (MotionBERT, VideoPose3D, SimpleBaseline3D) on AthletePose3D with 996 test clips

- Analyzed the MPJPE–angle relationship per-joint and per-subject to establish that the inverse trend is systematic, not artifact

Results & Demos

The pose accuracy paradox: models with lower MPJPE produce higher joint angle error

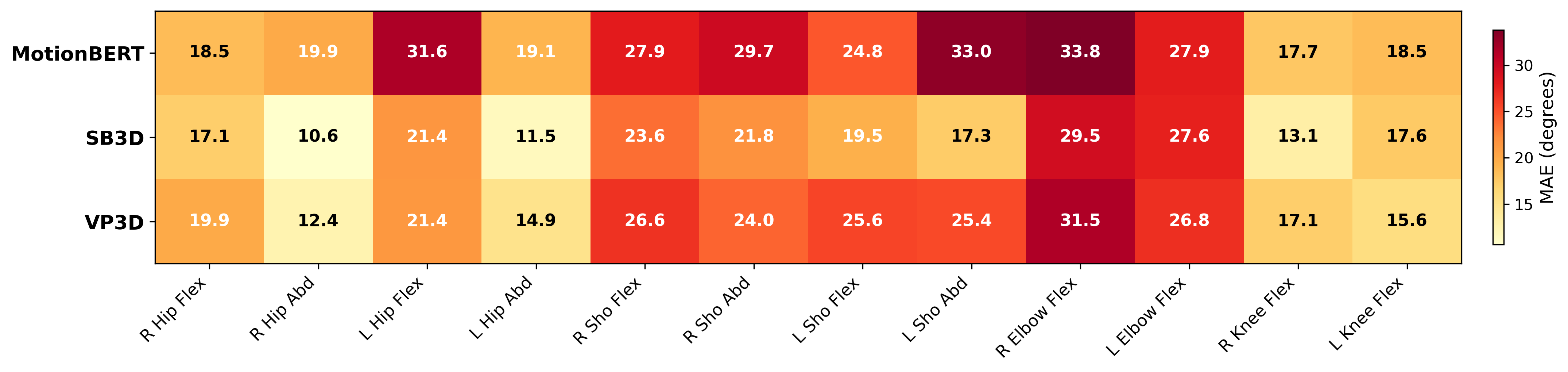

Per-angle MAE (degrees) across three models — inverse trend holds for every joint

Skeleton to joint angle conversion

Joint angle visualization

Comparison with ground truth

Ground truth joint angle overlay

Findings

Our evaluation reveals a paradoxical inverse relationship between MPJPE and joint angle accuracy that is consistent across joints, subjects, and model architectures.

MPJPE vs. Angle MAE

The central finding is an inverse relationship between spatial and angular accuracy. Ground-truth poses yield 2.95° MAE, which is the error floor of the pose-to-angle model itself. SimpleBaseline3D (58.5 mm MPJPE on H36M) achieves 19.21° angle MAE, VideoPose3D (46.8 mm) produces 21.77°, and MotionBERT (37.2 mm), the best model by MPJPE, yields the worst angle accuracy at 25.19°. The model ranking by MPJPE is exactly reversed when evaluated on joint angles.
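The rank reversal can be checked directly from the three reported pairs of numbers. The sketch below uses only the values quoted above; the Spearman helper is a plain textbook formula for untied ranks, written out here rather than taken from any library.

```python
# (MPJPE in mm, angle MAE in degrees) as reported above.
results = {
    "MotionBERT": (37.2, 25.19),
    "VideoPose3D": (46.8, 21.77),
    "SimpleBaseline3D": (58.5, 19.21),
}

by_mpjpe = sorted(results, key=lambda m: results[m][0])  # best MPJPE first
by_angle = sorted(results, key=lambda m: results[m][1])  # best angle MAE first

def spearman_rho(order_a, order_b):
    """Spearman rank correlation for two orderings without ties."""
    n = len(order_a)
    d2 = sum((order_a.index(m) - order_b.index(m)) ** 2 for m in order_a)
    return 1 - 6 * d2 / (n * (n * n - 1))

rho = spearman_rho(by_mpjpe, by_angle)
print(by_mpjpe)  # ['MotionBERT', 'VideoPose3D', 'SimpleBaseline3D']
print(by_angle)  # ['SimpleBaseline3D', 'VideoPose3D', 'MotionBERT']
print(rho)       # -1.0
```

With only three models the coefficient is a description of the ordering, not a significance test.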

Per-Joint Analysis

Upper limb joints are hardest: across models, shoulder angle MAE ranges from 17° to 33° and elbow MAE from 20° to 30°. Lower limbs are most reliable, with hip abduction at 8–12° and knee flexion at 13–18°. Critically, the inverse MPJPE–angle trend holds within each joint: MotionBERT is consistently worst and SimpleBaseline3D consistently best, confirming the pattern is not driven by a single problematic joint.

Per-Subject Consistency

Analyzing results within each AthletePose3D subject confirms the inverse relationship is not an artifact of subject-level averaging. MotionBERT produces the highest angle MAE for every individual subject despite having the lowest MPJPE. This per-subject consistency rules out explanations based on body-type confounds or dataset imbalance.

Key Outcomes

- Learned pose-to-angle mapping achieving 2.95° MAE on ground-truth poses, validating it as a reliable evaluation tool

- Demonstrated an inverse relationship between MPJPE and joint angle accuracy across three SOTA models

- Produced per-joint error analysis showing upper limbs (17–33°) are hardest and lower limbs (8–18°) most reliable

- Advocated for angle-based evaluation metrics to complement MPJPE in pose estimation benchmarks

Discussion

The inverse MPJPE–angle relationship challenges a foundational assumption in pose estimation and has concrete implications for how the community evaluates and trains models.

The MPJPE–Angle Paradox

We propose two mechanisms for the paradox. First, MPJPE-optimal error distributions may distort angular relationships: MPJPE is directionally insensitive, so a model can achieve low positional error while distributing that error in directions that maximally disrupt joint angles. Second, more expressive models (e.g., MotionBERT) learn strong priors from H36M's constrained lab movements that fail to generalize to athletic motion, producing spatially plausible but angularly incorrect poses in the AthletePose3D domain.

Implications for the CV Community

We advocate for reporting angle accuracy alongside MPJPE in pose estimation benchmarks, particularly when models are intended for biomechanical applications. The trained pose-to-angle mapping provides a lightweight, model-agnostic evaluation tool — any 3D pose estimator can be assessed on angular fidelity by passing its outputs through the network, requiring no additional motion capture infrastructure at evaluation time.

Future Work

The natural extension is biomechanically constrained training: L_total = L_pos + λ · L_ang, where L_pos is the positional loss, L_ang is the angle loss computed via the differentiable pose-to-angle mapping, and λ weights the two terms. This would allow 2D-to-3D lifting models to optimize directly for angular accuracy during training, potentially resolving the MPJPE–angle paradox by making the two objectives jointly visible to the optimizer.
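A minimal numeric sketch of the combined objective follows. Several parts are assumptions made for illustration: L_pos is taken as a mean per-joint Euclidean distance, L_ang as a mean absolute angle difference, `lam=0.01` is an arbitrary weight, and `toy_pose_to_angle` is a hypothetical stand-in for the trained network. Actual training would use an autodiff framework so that gradients flow through the pose-to-angle mapping.

```python
import math

def toy_pose_to_angle(pose):
    """Hypothetical stand-in: one angle (degrees) at the middle of 3 joints."""
    a, b, c = pose
    u = [a[i] - b[i] for i in range(3)]
    v = [c[i] - b[i] for i in range(3)]
    cross = (u[1] * v[2] - u[2] * v[1],
             u[2] * v[0] - u[0] * v[2],
             u[0] * v[1] - u[1] * v[0])
    dot = sum(x * y for x, y in zip(u, v))
    return [math.degrees(math.atan2(math.sqrt(sum(x * x for x in cross)), dot))]

def combined_loss(pred_pose, gt_pose, pose_to_angle, lam=0.01):
    """L_total = L_pos + lam * L_ang under the assumptions stated above."""
    l_pos = sum(math.dist(p, g) for p, g in zip(pred_pose, gt_pose)) / len(gt_pose)
    pred_ang, gt_ang = pose_to_angle(pred_pose), pose_to_angle(gt_pose)
    l_ang = sum(abs(p - g) for p, g in zip(pred_ang, gt_ang)) / len(gt_ang)
    return l_pos + lam * l_ang, l_pos, l_ang

gt = [(0.0, 1.0, 0.0), (0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]    # 90 deg at joint 1
pred = [(0.0, 1.0, 0.0), (0.0, 0.0, 0.0), (1.0, 1.0, 0.0)]  # 45 deg at joint 1

l_total, l_pos, l_ang = combined_loss(pred, gt, toy_pose_to_angle)
print(f"L_pos={l_pos:.4f}  L_ang={l_ang:.2f}  L_total={l_total:.4f}")
```

Because L_pos and L_ang carry different units (length versus degrees), λ also acts as a unit-balancing factor and would need tuning.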